Is it possible to convey an actor’s emotions using first-person video?

Triggering emotions can reinforce your intended brand associations by accelerating the subconscious, associative learning process. Together with Red Bull, Mindspeller set out to understand whether, and how, marketers can use video to convey the emotions experienced by the actor.

THE SETUP

For this study, our friends at Red Bull asked whether our Brain-Computer Interface (BCI) expertise could help them make videos that convey the actor’s most intense emotions. As a first step, we used EEG to analyze the range and nature of the emotions athletes experience when watching video of their performance from a first-person point of view (POV). We wanted to find out to what extent an athlete relives her emotions by watching a first-person video of her own performance. What triggers the most intense feelings? And how can we use these insights to create videos that convey those emotions? Let’s find out!

THE STUDY

For our pilot study, we formed two groups of EEG test subjects. The first group represented the actors (i.e., the athletes performing the sport shown in the videos); the second represented the spectators (i.e., people who were not directly involved in the sports performance shown in the video). Both groups were shown videos filmed from the POV perspective and from a spectator perspective. To make the results scientifically reliable, we also used reference videos that were unrelated to any sporting event.

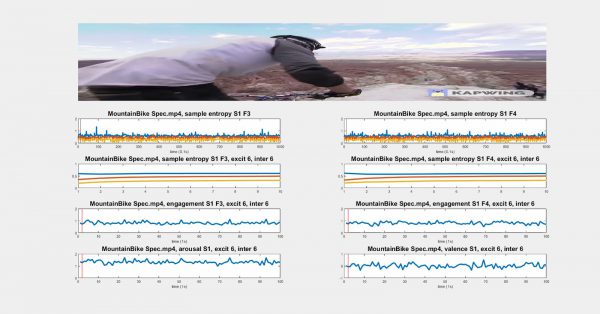

The nature and intensity of our brain activity can be visualized as activity in different frequency bands (cf. the wave frequencies shown in the image above). Each band signals a particular state of mind: e.g., the Beta band correlates with ‘focus’, the Theta band with ‘non-focus’, and the Mu band with ‘sleep and relaxation’.
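As a rough illustration of what splitting brain activity into frequency bands means, the sketch below computes the spectral power of a single EEG channel in each of the bands named above. It is a minimal sketch under stated assumptions, not Mindspeller’s pipeline: the band edges (Theta 4–8 Hz, Mu 8–13 Hz, Beta 13–30 Hz) are common textbook values assumed here, the function name `band_powers` is hypothetical, and the input is a synthetic signal rather than a real EEG recording.

```python
import cmath
import math

# Assumed band edges in Hz, as half-open ranges [low, high). The article does
# not give ranges; these are common textbook values. Mu shares the alpha range
# and is distinguished by electrode position (over sensorimotor cortex).
BANDS = {
    "theta": (4.0, 8.0),
    "mu": (8.0, 13.0),
    "beta": (13.0, 30.0),
}


def band_powers(samples, fs):
    """Return summed spectral power per band for one EEG channel.

    Uses a direct discrete Fourier transform, O(n^2), which is fine for a
    short illustrative window; a real pipeline would use an FFT or Welch's method.
    """
    n = len(samples)
    mean = sum(samples) / n
    x = [s - mean for s in samples]  # remove the DC offset
    powers = {name: 0.0 for name in BANDS}
    for k in range(1, n // 2 + 1):  # positive-frequency bins only
        freq = k * fs / n
        coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        power = abs(coeff) ** 2 / n
        for name, (low, high) in BANDS.items():
            if low <= freq < high:
                powers[name] += power
    return powers


# Synthetic 1-second "recording" at 256 Hz: a strong 20 Hz (beta) component
# plus a weak 6 Hz (theta) component.
fs = 256
signal = [
    math.sin(2 * math.pi * 20 * t / fs) + 0.2 * math.sin(2 * math.pi * 6 * t / fs)
    for t in range(fs)
]
powers = band_powers(signal, fs)
print(max(powers, key=powers.get))  # beta
```

Summing power per band turns a raw waveform into a handful of numbers that can be compared over time or between subjects; a production pipeline would also filter, re-reference and clean the signal before this step.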

From the patterns in these brain waves, we can derive insights into the feelings and emotions our test subjects experienced while watching (or reliving) the sporting performance. Thanks to Mindspeller’s BCI signal-processing expertise, we can reliably separate the EEG signal from noise and reveal which video frames trigger which type of emotion.
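To connect band activity to specific video frames, one simple approach is to cut the recording into fixed windows, discard windows that look like artifacts, and map each remaining window to the video frames it overlaps. The sketch below is a hypothetical illustration of that idea, not Mindspeller’s signal-processing method: the function names, the 1-second window, the 100 µV peak-to-peak rejection threshold and the use of Beta power (which the article links to focus) as the score are all assumptions, and attaching specific emotions to such scores would require far more than this.

```python
import cmath
import math


def beta_power(window, fs, low=13.0, high=30.0):
    """Spectral power in [low, high) Hz via a direct DFT over that band's bins only.

    The 13-30 Hz default is an assumed textbook beta range.
    """
    n = len(window)
    mean = sum(window) / n
    x = [s - mean for s in window]  # remove the DC offset
    total = 0.0
    for k in range(1, n // 2 + 1):
        freq = k * fs / n
        if low <= freq < high:
            coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            total += abs(coeff) ** 2 / n
    return total


def frames_by_beta(samples, fs, fps, window_s=1.0, max_ptp_uv=100.0):
    """Hypothetical helper: split one EEG channel (in microvolts) into
    non-overlapping windows, drop windows whose peak-to-peak amplitude suggests
    an artifact (e.g. a blink), and return (first_frame, last_frame, power)
    tuples sorted by descending beta power."""
    step = int(window_s * fs)
    results = []
    for start in range(0, len(samples) - step + 1, step):
        window = samples[start:start + step]
        if max(window) - min(window) > max_ptp_uv:
            continue  # likely artifact: skip it rather than score it
        t0, t1 = start / fs, (start + step) / fs
        first_frame = int(t0 * fps)
        last_frame = int(t1 * fps) - 1
        results.append((first_frame, last_frame, beta_power(window, fs)))
    return sorted(results, key=lambda r: r[2], reverse=True)


# Synthetic 3-second channel at 128 Hz, aligned with a 25 fps video.
fs, fps = 128, 25
signal = []
for t in range(3 * fs):
    amplitude = 10.0 if t < fs else 30.0  # microvolts; stronger beta after 1 s
    signal.append(amplitude * math.sin(2 * math.pi * 20 * t / fs))
signal[2 * fs + 10] += 150.0  # simulated blink artifact in the third second
ranked = frames_by_beta(signal, fs, fps)
print([r[:2] for r in ranked])  # [(25, 49), (0, 24)]
```

Here the third second is rejected as an artifact, and frames 25 to 49 rank above frames 0 to 24 because the beta component is stronger there. Ranking windows this way only shows where Beta activity peaked; comparing actor and spectator recordings would build on such a step.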

THE RESULTS

Our pilot study revealed interesting results about the emotions we experience while watching videos. When actors (who had actually performed the sport in real life) watched the video, they seemed to relive the scenes. Their EEG recordings also showed an increase in brain activity that was not seen in the recordings of spectators.

The EEG recordings of spectators, who had not performed the sport themselves, showed an increase only during the most spectacular action scenes, e.g., a game-changing attack during a boxing match. This difference between the athlete and spectator EEG profiles points to the potential to increase spectator engagement by mimicking the emotions experienced by the performing athlete.

The most intense feelings during the POV videos were evoked by combining the first-person perspective, which lets viewers ‘relive’ the sporting event, with amplification of the sensory stimuli that are most unexpected from a spectator’s point of view (e.g., the sound of a snowboard scraping against rocks). Actor subjects felt as if they were back in the moment, reliving the performance, while spectators felt as if they were taking the first-person perspective of the performing athlete.

PRELIMINARY TAKEAWAYS:

- Identify the key ‘first-person’ emotional scenes in your video by recording the EEG of the performing actor as she relives her performance, watching the first-person POV video shortly afterwards.

- Focus on dynamic scenes such as an extreme sporting event or performance to increase viewer engagement.

- Emphasize the most amazing sequences as well as the people and surprising sensory stimuli encountered by the actor during the performance.

STAY TUNED FOR PART II OF THIS EXCITING NEW RESEARCH THIS SUMMER!

Interested in Mindspeller’s Neurosemantics-as-a-Service? Contact us today to learn how we can help you.